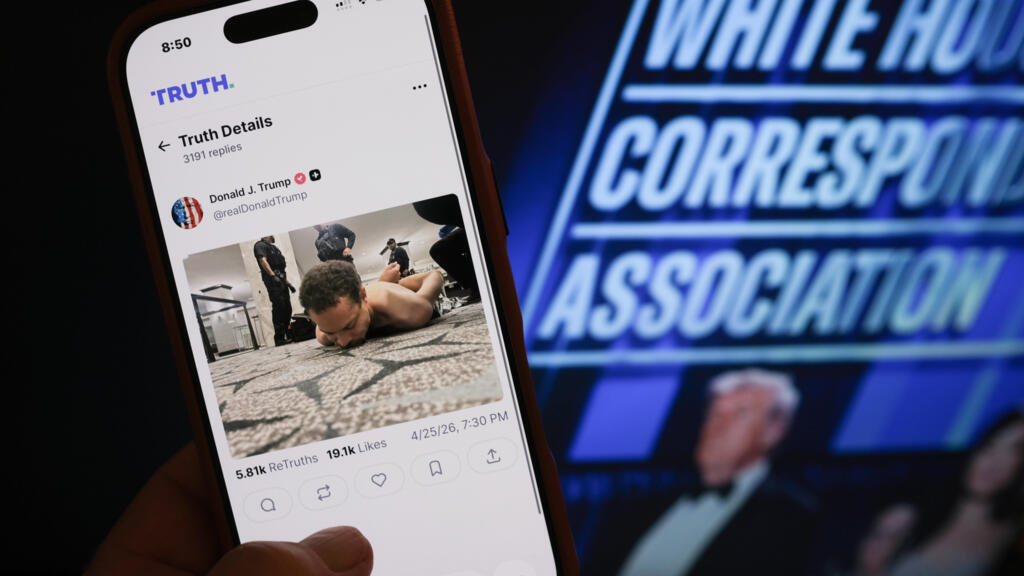

Social media platforms have been flooded with low-effort, AI-generated misinformation following the attempted assassination of President Donald Trump at a Washington gala.

Within hours of authorities identifying 31-year-old Cole Tomas Allen as the suspect, dozens of fabricated images surfaced, falsely linking him to a wide array of public figures.

These AI “fakes” depict Allen as a former employee or associate of celebrities like Taylor Swift and Tom Hanks, as well as political figures such as Barack Obama.

Experts refer to this surge of synthetic content as “AI slop,” noting that advancements in generative technology now allow bad actors to create convincing fakes using only a single reference photo.

This represents a significant shift from previous years, when high-quality manipulations required a large library of images to train the AI.

Digital literacy specialists warn that content farms are mass-producing these images to exploit algorithms that prioritise viral engagement over accuracy.

The volume of these fabrications has raised serious concerns about the long-term impact on public perception.

Researchers fear that the sheer quantity of “nonsense” following major world events could lead to “truth decay,” in which social media users eventually stop trying to distinguish authentic photos from AI-generated fabrications.

Despite pleas from journalists and victims whose likenesses have been used in these posts, the profitability of viral AI content suggests this trend is unlikely to slow down.
